How Microsoft Conjured Up Real-Life Star Wars Holograms

By Brian Barrett as written on www.wired.com

Help me, HoloLens. You’re my only hope.

OK, so maybe it’s not time to write R2-D2 out of Star Wars quite yet. But Microsoft researchers have created something that brings one of the droid’s best tricks to our present-day lives. It’s called holoportation, and it could change how we communicate over long distances forever. Also, it makes for one hell of a demo.

It started, though, as a response to homesickness, says project lead Shahram Izadi. His Cambridge (UK)-based team, which focuses on 3D-sensor technologies and machine learning among other next-generation computing concerns, had spent two and a half years embedded with the HoloLens team in Redmond, Washington. Izadi is a father—that’s his daughter in the demo video—and when the time came to dream up the next challenge, they turned to the one that most affected their personal and professional lives during that stretch: communication.

“We have two young children, and there was really this sense of not really being able to communicate as effectively as we would have liked,” Izadi says. “Tools such as video conferencing, phone calls, are just not engaging enough for young children. It’s just not the same as physically being there.”

So they created something that, in several key ways, is. Holoportation, as the name implies, projects a live hologram of a person into another room, where they can interact with whomever’s present in real time as though they were actually there. In this way, it actually one-ups the classic Star Wars version, in which a recorded message appears in hologram form. Holoportation can do that, too, but the real magic is in what basically amounts to a holographic livestream.

As you might imagine, that takes a lot of horsepower.

I’ll Take You There

The Holoportation system starts with high-quality 3D-capture cameras placed strategically around a given space. Think of each of them as a Kinect camera with a serious power-up. “Kinect is designed to track the human skeleton,” says Izadi. “We’re really about capturing high quality detail of the human body, to reconstruct every feature. That has required a rethinking of the 3D sensor from the ground up.”

Once those cameras have captured every possible viewpoint, custom software stitches them together into one fully formed 3D model. This process is ongoing, Izadi says, as more frames of data make for a higher-quality model. The accumulated data results in an incredibly lifelike hologram that can be transported anywhere in the world that has a receiving system, like, say, a HoloLens. And it can do it fast.

“We want to do all of this processing in a tiny window, around 33 milliseconds to process all the data coming from all of the cameras at once, basically, and also create a temporal model, and then stream the data,” says Izadi, whose team leans on a small army of off-the-shelf (but high-end) Nvidia GPUs to crunch the relevant numbers.
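To put that window in perspective, here is a rough back-of-the-envelope sketch. Only the 33-millisecond figure comes from Izadi; the function names and the per-stage timings are hypothetical, made up purely to illustrate how a per-frame budget works.

```python
def frames_per_second(budget_ms: float) -> float:
    """Frame rate implied by a fixed per-frame processing budget."""
    return 1000.0 / budget_ms


def fits_budget(stage_times_ms: dict, budget_ms: float = 33.0) -> bool:
    """True if all stages, run back to back, finish inside the budget."""
    return sum(stage_times_ms.values()) <= budget_ms


# Hypothetical stage timings in milliseconds (illustrative only, not measured).
stages = {"capture_and_fuse": 15.0, "temporal_model": 10.0, "compress_and_stream": 6.0}

fps = frames_per_second(33.0)   # roughly 30 frames per second
on_time = fits_budget(stages)   # 31 ms of work fits in a 33 ms window
```

A 33-millisecond window works out to roughly 30 frames per second, about the rate of ordinary video, which is why every stage has to fit inside it together.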

But wait, you’re saying, that must be an insane amount of data to transmit. You’re right! Not only does the holoportation process generate mountains of data, but, as Izadi points out, most streaming video codecs aren’t particularly 3D-friendly. That makes compression, which in this case transforms gigabytes into megabytes, a huge part of making holoportation work.

Aligning Worlds

To be clear, what you’re seeing in this video is real. It actually does work. There are still some hurdles to overcome before holoportation becomes a part of our everyday lives, though. You’ll notice, for instance, that the furniture in the two rooms that Microsoft uses is identical, making interaction much more seamless than it would be with the furniture from two rooms overlapping, or people walking through desks, and so on. Fortunately, there’s a straightforward solution: Train the cameras to only focus on the items you want to holoport, rather than an entire room.

“The user could potentially decide that they don’t want to replicate any furniture,” says Izadi. “We have this notion of background segmentation, where you capture the room just with the furniture in it, and that means that only the foreground object that goes into the room after will be of interest for the stream.” You could also strategically incorporate certain pieces of furniture by deciding how the two rooms align. Take, as an example, two grandparents holoporting in from their couch so that they can experience Christmas morning. Rather than let them float in mid-air, one could decide to orient their seated holograms onto the couch in their own living room.
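The background-segmentation idea Izadi describes can be sketched in a few lines. This is a minimal illustration, not Microsoft's implementation: it assumes each camera yields a depth map (distance in meters per pixel), and the `foreground_mask` name, the tiny 2x3 "images," and the 5-centimeter threshold are all hypothetical.

```python
def foreground_mask(background, frame, threshold=0.05):
    """Mark pixels whose depth differs from the empty-room capture.

    background: depth map of the room with only furniture in it
    frame:      depth map of the same view during the live session
    threshold:  minimum depth change in meters to count as foreground (assumed)
    """
    return [
        [abs(f - b) > threshold for f, b in zip(frame_row, bg_row)]
        for frame_row, bg_row in zip(frame, background)
    ]


# Empty room: a back wall at 3.0 m, with a couch at 2.0 m in one pixel.
background = [[3.0, 3.0, 3.0],
              [3.0, 2.0, 3.0]]

# Live frame: a person now stands 1.5 m away in the top-middle pixel.
frame = [[3.0, 1.5, 3.0],
         [3.0, 2.0, 3.0]]

mask = foreground_mask(background, frame)
# Only the person's pixel is flagged; the couch matches the background and is skipped.
```

Because the couch was already present when the empty room was captured, it never enters the stream. Only what walks in afterward does.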

This gets to be fairly heady stuff. For now, it’s probably enough to know that Izadi’s team is aware of potential spatial problems, and sees them instead as opportunities. After all, Izadi ultimately sees the project as a consumer device. We already have dedicated home theaters; why not dedicated home holoportation rooms, as well?

“The end goal and vision for the project is really to boil this down to something that’s as simple as a home cinema system,” says Izadi. “You walk in to a number of these almost speaker-like units, in a way that you would set up surround sound, but this is giving you surround-vision.”

Long before then, maybe even within a couple of years, Izadi expects that you might find holoportation rigs in meeting rooms. And while it would be expensive (that’s a lot of cameras, and a lot of GPUs), he points out that global business travel costs about a trillion dollars a year. Holoportation starts to look a lot less expensive next to a round-trip ticket to Shanghai.

Also intriguing? While Izadi’s team has worked closely with HoloLens, their system doesn’t play hardware favorites. All you’d really need at the receiving site is a virtual reality or augmented reality headset.

“We’re very much agnostic to what we call the ‘viewer’ technology,” says Izadi. “Obviously we feel like there are some unique scenarios with HoloLens, but we would like to leverage as many display technologies as possible.”

Including, one suspects, plucky little droids.

Continued Reading

June 8, 2016

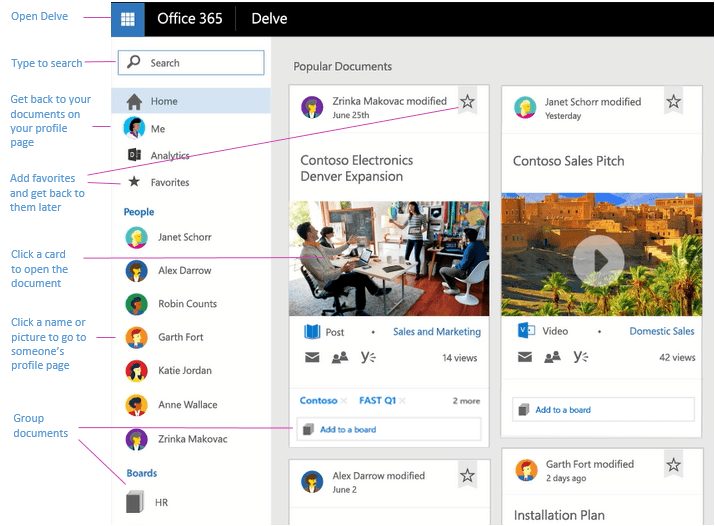

What is Office Delve?

[vc_row][vc_column][vc_column_text] What is Office Delve? As written on support.office.com Delve […]

LEARN MOREOffice365

June 8, 2016

National Best Friend's Day Made Easy with Yammer

[vc_row][vc_column][vc_column_text][vc_single_image image="9318" img_size="900x500" alignment="center"][vc_column_text] National Best Friend's Day Made Easy […]

LEARN MOREOffice365